Buildkite Continuous Integration

This document describes our CI setup on buildkite. It is aimed at the maintainers of the CI setup and at contributors who want to add or modify steps in the CI pipelines.

We currently use buildkite to run the build, lint, test, and integration test steps for all pull requests to the scionproto/scion github repository.

Overview

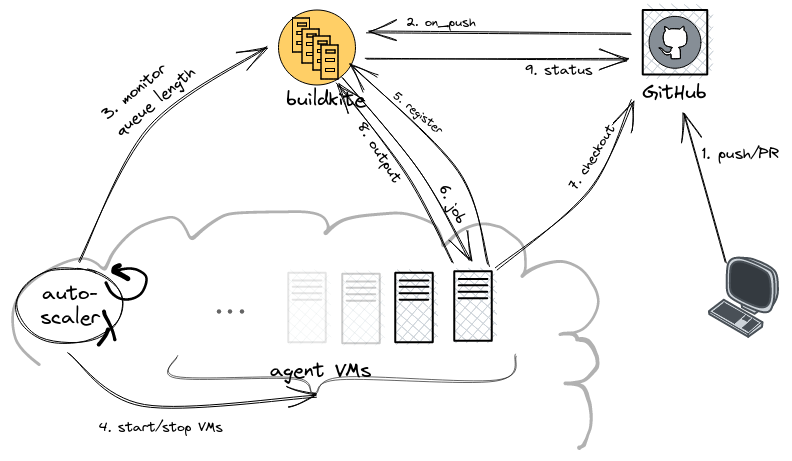

Buildkite itself orchestrates the builds; the “agents” that execute them are provided by us. We run an autoscaling cluster of buildkite agents on AWS VMs, using the buildkite Elastic CI Stack for AWS.

1. Create a PR, or push to an existing PR, in scionproto/scion.

2. Github triggers the buildkite pipeline scion. This adds the first job of the pipeline to the job queue.

3. The autoscaler, running in an AWS lambda, monitors the length of the job queue.

4. The autoscaler spins up new VMs, or reduces the number of VMs after some idle time.

5. The buildkite-agent process in a newly started VM registers to buildkite.com with the agent code for our organisation.

6. The agent receives a job from the job queue.

7. The agent clones the github repository.

8. The agent runs its job and uploads the output to buildkite.

Importantly, buildkite jobs can spawn new jobs by uploading more pipeline steps (from the agent to the central buildkite service).

The first job of a pipeline is configured in the buildkite pipeline. Usually, this is a dummy step that loads or expands the pipeline config file from the repository, spawning all the jobs that will then perform the actual build tasks.

For the scion and scion-nightly pipelines, this first step is to run .buildkite/pipeline.sh in the repository to generate the full pipeline:

.buildkite/pipeline.sh | buildkite-agent pipeline upload
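A pipeline generator of this kind is simply a program that prints pipeline steps as YAML on standard output. The following is a minimal sketch of the idea, not the contents of the actual .buildkite/pipeline.sh; the function names, step labels, and make targets are hypothetical.

```shell
#!/bin/sh
# Hypothetical sketch of a dynamic pipeline generator. The labels and
# commands are illustrative assumptions, not the real scion pipeline steps.

# emit_step LABEL COMMAND: print one command step in buildkite pipeline YAML.
emit_step() {
    printf '  - label: "%s"\n' "$1"
    printf '    command: "%s"\n' "$2"
}

# gen_pipeline: print a complete pipeline definition to stdout.
gen_pipeline() {
    echo "steps:"
    emit_step "Build" "make build"
    emit_step "Lint" "make lint"
    # A wait step makes the following steps start only after the previous ones finish.
    echo "  - wait"
    emit_step "Test" "make test"
}

gen_pipeline
```

On an agent, the output of such a script would be piped into buildkite-agent pipeline upload, exactly as in the command above.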

Steps 3./4. and 6./7./8. are then repeated until all pipeline jobs have been processed (or until the pipeline is aborted). Finally, buildkite reports the status of the build pipeline back to github.

Agents

Machine Image

We use the default machine image of the Elastic CI Stack for AWS release v6.7.1. What’s on each machine:

Amazon Linux 2023

Buildkite Agent v3.50.2

Docker v20.10.25

Docker Compose v2.20.3

AWS CLI

jq

Dependencies

Bazel, as well as additional build tools and dependencies that are not managed by bazel, are installed in the pre-command hook.

Caching

Bazel relies heavily on caching, both to fetch external dependencies and to avoid rebuilding or retesting identical components. For this, we run a bazel-remote cache on each build agent, backed by a shared AWS S3 bucket.

Analogously, we run an athens go modules proxy on each build agent. This is also backed by a shared AWS S3 bucket.

Both of these processes are started as docker containers from the pre-command hook.
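As a rough illustration, starting both services from a hook could look like the sketch below. It assumes that .buildkite/hooks/bazel-remote.yml and .buildkite/hooks/go-module-proxy.yml are Docker Compose files defining the two services; the function name and the optional dry-run argument are hypothetical and not taken from the actual hook.

```shell
#!/bin/sh
# Hypothetical sketch, assuming the two .yml files are Docker Compose files.

# start_cache_services [RUNNER]
# RUNNER defaults to "docker". Passing "echo" gives a dry run that only
# prints the arguments that would be passed to docker.
start_cache_services() {
    runner=${1:-docker}
    for file in .buildkite/hooks/bazel-remote.yml \
                .buildkite/hooks/go-module-proxy.yml; do
        # "up -d" creates and starts the services in the background.
        "$runner" compose -f "$file" up -d || return 1
    done
}

# Dry run: print the compose invocations without starting anything.
start_cache_services echo
```

Keeping the runner as a parameter makes the sketch easy to try on a machine without docker; a real hook would call the function without arguments.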

Secrets

The Elastic CI Stack for AWS has a designated S3 bucket for secrets.

In particular, this bucket contains an env script that is sourced by the buildkite agents.

The secrets to access the S3 buckets for the caches mentioned above are injected as environment variables from this env script.

See .buildkite/hooks/bazel-remote.yml and .buildkite/hooks/go-module-proxy.yml.
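Because the caches depend on variables injected by the env script, a hook may want to fail early with a clear message when one of them is missing. The helper below is a minimal sketch of such a check; the function and the variable name EXAMPLE_CACHE_ACCESS_KEY are hypothetical and do not reflect the names used in the actual env script.

```shell
#!/bin/sh
# Hypothetical sketch: the variable names are placeholders, not the real ones.

# require_env NAME...: report every named variable that is unset or empty,
# and return non-zero if at least one is missing.
require_env() {
    missing=0
    for name in "$@"; do
        # Indirectly read the variable whose name is stored in $name.
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "missing required variable: $name" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Example with a placeholder variable:
EXAMPLE_CACHE_ACCESS_KEY=dummy
require_env EXAMPLE_CACHE_ACCESS_KEY && echo "environment ok"
```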

Cluster configuration

Important

The agent cluster is operated by the SCION Association, in the AWS account scion-association.

Primary contact: jiceatscion. Alternate contact: nicorusti.

The agent cluster is based on the buildkite AWS CloudFormation template. Excerpt of the most relevant parameters:

# Instance Configuration:

ImageId: "" # use default machine image based on Amazon Linux 2023

InstanceType: m6i.2xlarge # 8 vCPUs, 32GiB memory

RootVolumeSize: 100

RootVolumeType: gp3

BuildkiteAdditionalSudoPermissions: ALL # allow any sudo commands in pipeline

EnableDockerUserNamespaceRemap: false # not compatible with using host network namespace

# Auto-scaling Configuration:

MinSize: 0

MaxSize: 6

ScaleInIdlePeriod: 600 # shut down after 10 minutes idle

ScaleOutFactor: 1.0

OnDemandPercentage: 0 # use only spot instances
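To make the effect of MinSize and MaxSize concrete, the sketch below models the target cluster size as the number of pending jobs clamped to the configured bounds. This is a simplified model, not the autoscaler's actual algorithm: it assumes one agent per instance and ignores ScaleOutFactor, running jobs, and the idle period. The function name is hypothetical.

```shell
#!/bin/sh
# Simplified, hypothetical model of the scaling target (one agent per
# instance assumed; ScaleOutFactor and idle handling are ignored).

# desired_instances PENDING_JOBS MIN_SIZE MAX_SIZE
desired_instances() {
    want=$1
    if [ "$want" -lt "$2" ]; then want=$2; fi
    if [ "$want" -gt "$3" ]; then want=$3; fi
    echo "$want"
}

desired_instances 0 0 6    # prints 0: an idle cluster can shrink to no VMs
desired_instances 4 0 6    # prints 4
desired_instances 20 0 6   # prints 6: capped by MaxSize
```

With MinSize set to 0, the first job after an idle period has to wait for a VM to boot; this is the trade-off for not paying for idle instances.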